Swati Gupta’s passion is finding connections that transcend boundaries. Central to her work, she looks at a problem from as many different angles as possible--applying methodologies and techniques from a range of disciplines--to find new insights and relationships. “A professor of mine once told me that often the extremely important papers in a field are those that discover latent connections between two different areas,” she says. “Drawing these connections has always been exciting to me.”

For instance, what do competing search engines, governments trying to catch smugglers, and the battles in Game of Thrones have in common? For Swati, it is that within each scenario you can find a two-player zero-sum game (games in which one player winning means the other must lose) with a vast number of playing strategies from which to choose.

To get a sense of this, think of rock-paper-scissors but using actual rocks, paper, and scissors. It is easy to find the optimal playing strategy if each of these is available in limited quantity. However, if there are more rocks, paper, and scissors available than you can count in any reasonable amount of time, how do you decide the best play to make? It’s a complex question that can be approached using powerful mathematical techniques. “We solve these games taking ideas from combinatorial optimization, convex optimization, and online learning,” says Swati. “It’s fascinating to see how these all give us different insights and bring in a multitude of applications.”

Going back to the Game of Thrones example, imagine a battle is about to take place in King’s Landing. Within King’s Landing there are key strategic points that the protecting army must guard, and it is these same points that the invading army will try to reach. In devising their strategy, the protecting army will try to predict the invading army’s route and position themselves to intersect it as much as possible. Conversely, the invading army will try to predict the route that minimizes intersection, keeping them away from the protecting army as much as possible. What is the best strategy for each army to use? In her research, Swati frames this as an example of a two-player zero-sum game, where each army is considered a player.

Another type of problem that can be solved within the two-player zero-sum game framework is dueling algorithms. For instance, consider two search algorithms that rank web pages, like Google or Bing. Suppose that for a given phrase one search engine, Search Engine A, has developed a probability distribution that represents the fraction of users looking for the resulting pages. In this example we’ll use the phrase “famous artists” and say there is a probability that 20 percent of people are looking for Picasso in their search results, 15 percent for Dali, and so forth. To maximize customer satisfaction, Search Engine A would provide a greedy ranking of pages, such that the most popular (Picasso, in this case) shows up at the top of the results. What happens, though, if we add a competing search engine, Search Engine B, to the mix? What strategy might Search Engine B use to attract more customers than Search Engine A? With knowledge of the probability distributions, Search Engine B could remove the most popular search result knowing that Search Engine A will get that 20 percent of the customers. However, by moving up all of the other results (with Dali at the top, now) Search Engine B will get the remaining 80 percent. Swati views this part of her work with game theory as a foray into analyzing competing algorithm scenarios. Such scenarios come up not just in search engines but in many things we use in our day-to-day lives, like recommendation systems (e.g. Yelp) and routing applications (e.g. Waze).

Once she has set these problems up as two-player zero-sum games, Swati uses a range of methods to solve them. Two related techniques are multiplicative weights update (MWU) and mirror descent. Both are online learning algorithms, meaning that a question is answered (What is the best route, given that we don’t know the actual congestion?) using knowledge of previous outcomes (Main Street has heavy traffic during rush hour, so getting home this way takes longer than it might), as that knowledge is acquired. (We used Main Street on Monday but now that we know it can be congested at peak hours, on Tuesday let’s try Pine Street. Even though the distance may be longer, it could take less time). Here, the connection with games is that any online learning algorithm (with some nice properties) can be used to help both players learn the optimal strategies for two-player zero-sum games.

In the MWU method, the weights of different actions are updated each time a scenario is run, yielding a probability set of increasingly optimal solutions. In the example above, the decision to try Pine Street might have come about because Monday’s information indicated a lot of congestion, and so the weight of “Main street” (higher delay due to traffic) was reduced relative to the weight of “Pine Street” (lower delay due to distance). In her research, Swati shows how to update these weights in a reasonable amount of time even if the number of actions is very large.

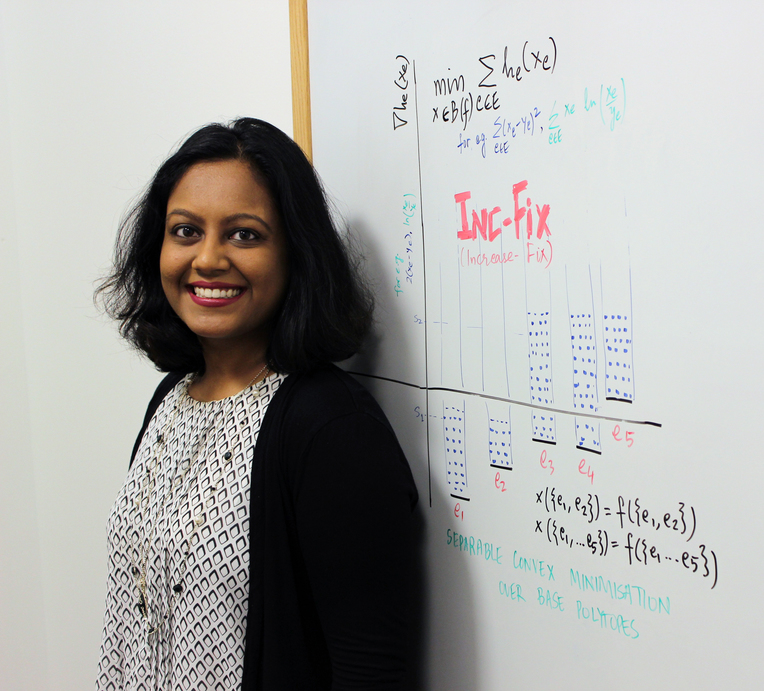

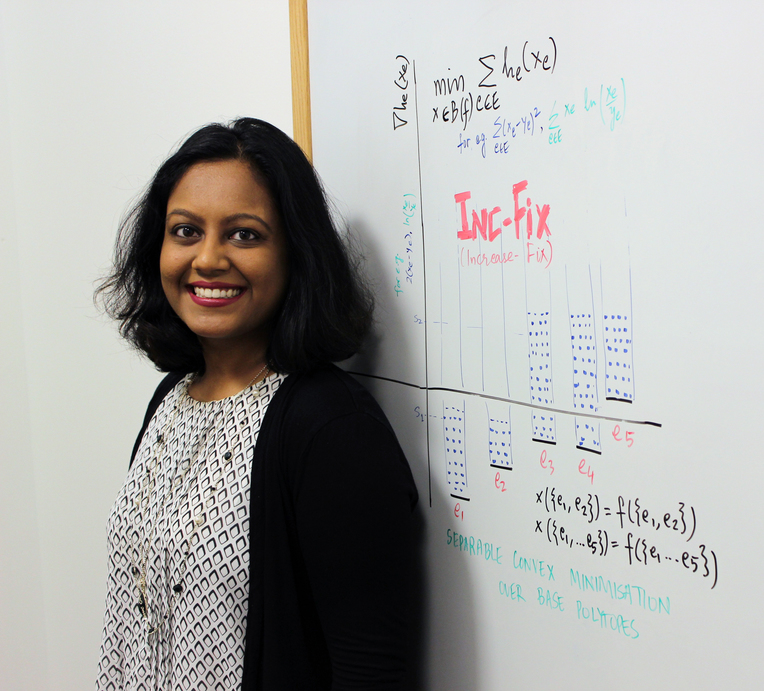

MWU is a special case of a more general optimization method called online mirror descent, which is also an iterative learning algorithm. You begin with a point inside the set of feasible decisions that represents one possible play. You make the play, observe your losses, and modify your strategy in a direction that would decrease the losses, with the hope that a new and improved point in the decision set is found. However, it’s possible that this move puts you outside the bounds of the decision set. Calculating the shortest path back to a point in the decision set that makes the most sense (i.e. is closest to the point representing the optimal strategy) is now a convex minimization problem. Swati developed an algorithm, Inc-Fix, that can solve this optimization problem for a general class of decision sets (called submodular base polytopes).

The Inc-Fix algorithm can be visualized by graphing gradient values against corresponding building blocks (elements) of the strategies. You begin by increasing the elements with the lowest gradient value until you hit a tight constraint (a boundary in the decision set) then you fix the elements that are tight and continue increasing the rest. “I really like this visualization,” says Swati. “You can think of it like filling water in a connected series of containers placed at different heights.”

"Approaching the problem of solving games via online learning led us to develop new insights that improve the state of the art of online learning. Getting deeper into the algorithm of mirror descent led us to develop Inc-Fix to do convex minimization,” says Swati. “This added more applications that we could have an impact on, some of which are in machine learning, some of which I‘m still discovering. And that is exciting to me."

Gracious, energetic, and quick to smile, Swati came to MIT after getting her bachelors and masters degrees in Computer Science and Engineering at the Indian Institute of Technology Delhi. “I’m from a very academic family, full of engineers and professors! Debating and puzzle-solving has been a part of growing up. I also love doing anything and everything creative - and research lets one be as creative as one can be. So, I want to do this for the rest of my life!” she says. She was drawn to MIT by its immense intellectual energy and its collaborative environment. Apart from her advisors, professors Michel Goemans and Patrick Jaillet, Swati has collaborated with a number of people at MIT including professors Dimitris Bertsimas and Georgia Perakis on robust inventory routing and dynamic pricing problems. (She has a dual affiliation with MIT’s Operations Research Center and LIDS.)

She recalls that while working with Michel, he once told her, “I need you to be critical of every step in the proof.” This idea of applying critical thought to her work is something Swati carries over into other areas of her life, as well, including her many creative projects. Perhaps the most notable of these is her panoramic photography, which she does using using her iPhone. Instead of remaining stationary while moving the camera she realized that to get a panoramic shot all she needed was relative motion. This meant she could be moving while the phone was stationary. “I started doing these experiments in 2013. I was on a high-speed train from Zurich to Paris and I put my phone on the window of the train,” she says. “There were amazing effects in the pictures because of the way the iPhone’s stitching algorithm works!” With encouragement from Martin Demaine (the Angelika and Barton Weber Artist-in-Residence in the Department of Electrical Engineering and Computer Science at MIT), Swati continued her panorama experiments, and eventually discovered she could use this feature to stitch together different views of a person’s face to fascinating effect, which led to a self-portrait studio titled Panoramia that is now being exhibited at the MIT Museum. (Swati is quick to acknowledge the MIT Museum Studio for their help in developing the project.)

Swati approaches her outside interests - photography, ambigrams (a way of drawing words so that they keep a meaning even when viewed upside down), and gender equity work - the same way she approaches her research: with drive, curiosity, and a focus on connection. “It’s been an adventure going from combinatorial optimization to game theory to online learning to convex optimization and discovering applications in different fields,” she says. It’s an adventure she looks forward to continuing for years to come.